The Bots Are Reading Along

On AI audiences, intellectual immortality, and why the "why" of writing matters more than the "who".

It’s been a little while! At the beginning of the month I got pretty sick, though admittedly it was nice to take my first publishing break in 3 years. But don’t worry: I’ve got more stuff in the works, including a Codex Basics livestream this Friday! If you’ve been meaning to try out AI coding agents but haven’t had the time, this is the stream for you.

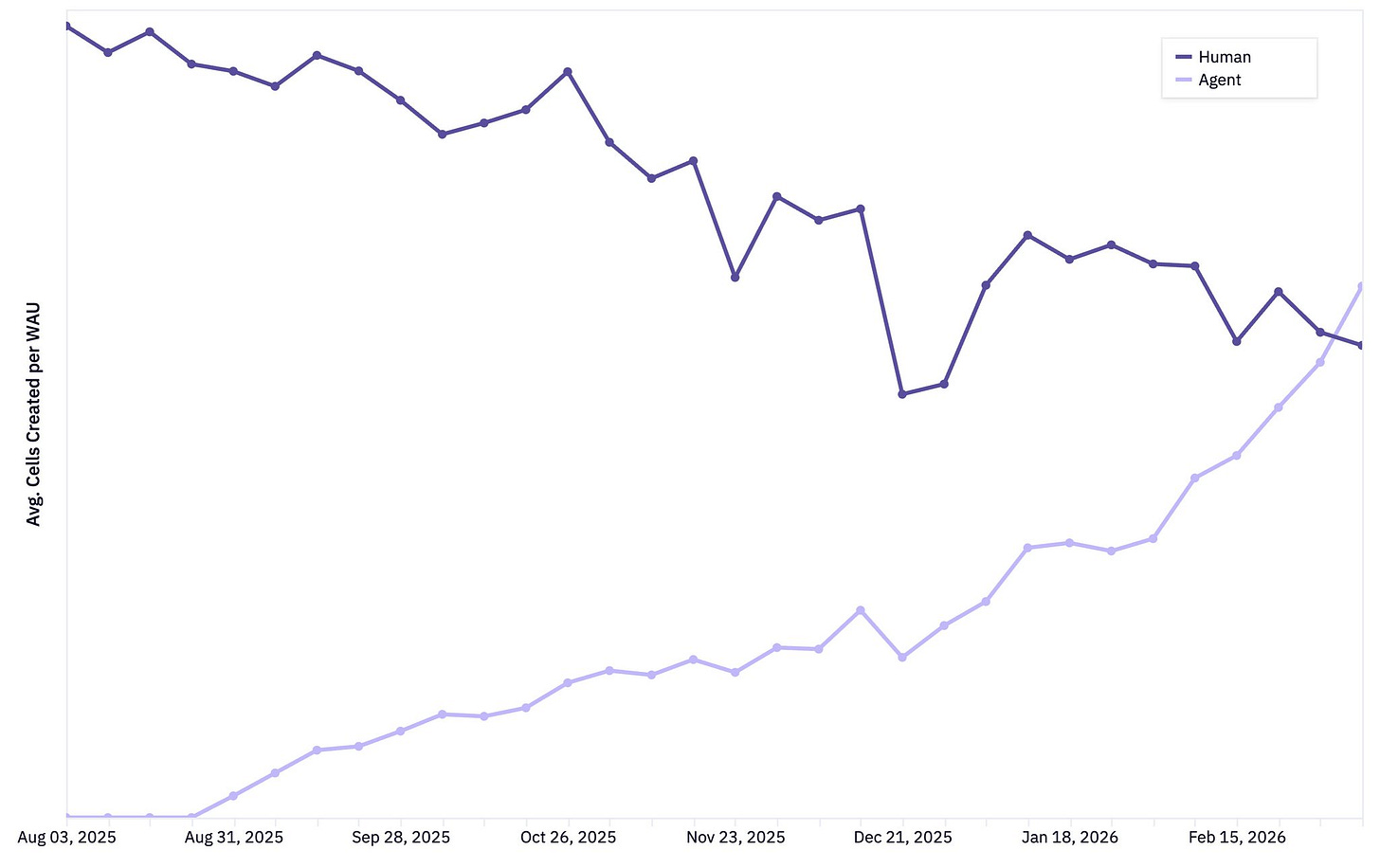

Yesterday I saw a tweet from Barry McCardel - the CEO of analytics platform Hex - which included a graph showing that agents are now creating more Hex cells (basically dashboard components) than humans are. Not “almost as many” or “a growing number.” Just more.

So I started digging, and it turns out that in February, Mintlify - which builds documentation platforms for developer tools - launched an analytics feature specifically for tracking AI agent traffic to your docs. Their framing was blunt: “AI agents are already reading your documentation. In many cases, they are reading it more often than humans.”

Granted, these aren’t all the exact same phenomenon - some of this is AI agents doing real work, and some is crawlers hoovering up training data[1]. But they point in the same direction. For certain categories of content, we have already hit a tipping point where humans are no longer the primary consumer.

And that raises a question I’ve been thinking about more and more: if more bots are reading your work than humans, what does that mean for how you create?

Brought to you by Claude

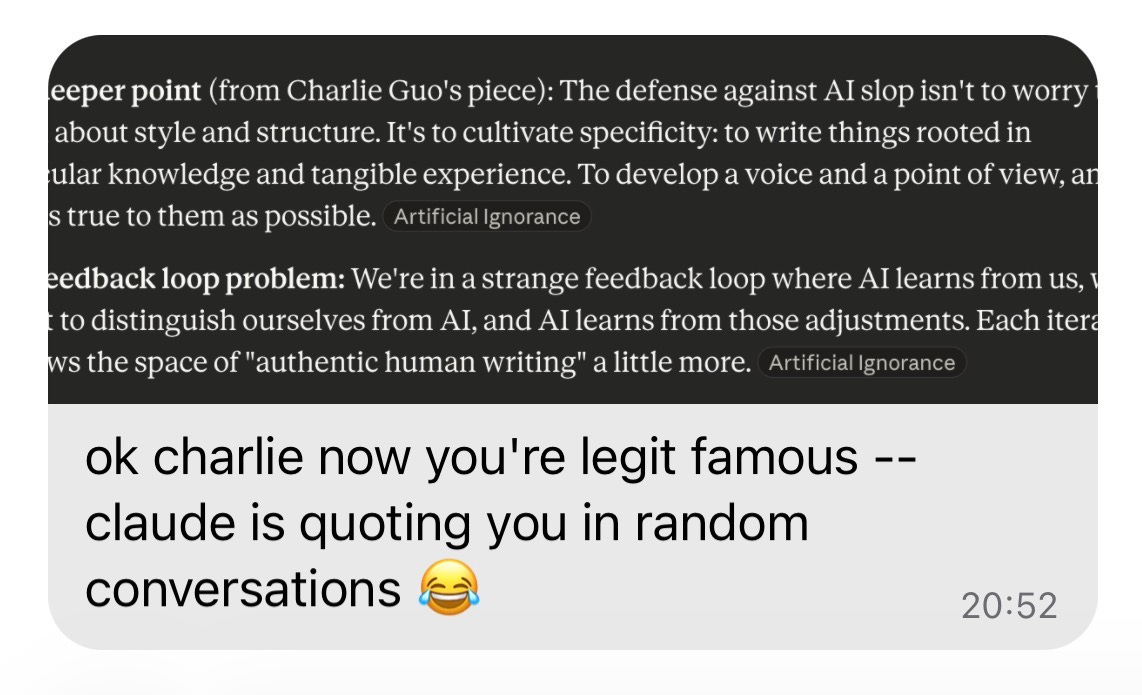

For me, the question became personal when a friend told me that Claude was citing my work. Not in an abstract, futuristic-sci-fi sense. In a literal, someone-asked-Claude-about-AI-slop-and-it-linked-to-Artificial-Ignorance sense.

This isn’t something I optimized for; I can’t say I’ve ever written a post with AI legibility in mind. I wrote them because I was trying to figure out how to spot AI slop, or why models might be getting dumber, or what the difference was between skills/tools/MCPs.

But it turns out that writing with authority and specificity is a great way to get noticed and surfaced by the chatbots, which tracks with what I found when I did a GEO (Generative Engine Optimization) deep dive last May[2]. The tactical advice for getting cited by AI systems mostly boils down to doing good work and making it findable. From the piece:

Write good content - AI models and search algorithms alike prioritize high-quality information.

Follow E-E-A-T (Experience, Expertise, Authority, and Trustworthiness) best practices, which Google has long championed.

Ensure your content is well-organized, easy to navigate, and quick to load: i.e., care about user experience.

Work on backlinks and citations from reputable sources.

Good GEO, it turns out, is mostly just good writing with good SEO.

But chatbot citations are just the tip of the iceberg. Last year, when I wrote about GEO, the main way your content interacted with AI was through search - someone asks ChatGPT a question, it retrieves your page, it cites you in the response. Manageable enough, right?

What’s become clear since then - through the rise of OpenClaw, MCPs, and the broader push toward personal AI agents - is that we’re headed toward a world where much of the productive browsing of the internet is intermediated by AI. Not just search queries, but research, comparison shopping, summarization, and decision-making. The stuff we used to do ourselves, because there was no alternative.

And if that’s where things are heading, then “how do I get cited by ChatGPT” isn’t really the right question anymore. The question is bigger: what does it mean to write in a world where AI is increasingly the primary reader?

It turns out a few people have been thinking about this for a while.

Citation, persuasion, and preservation

First up is Tyler Cowen, who made a pragmatic case in a Bloomberg column in January 2025 about why you should write for the AIs. It's the version I find most compelling - partly because I've seen it play out firsthand, and partly because Cowen's whole approach to AI tends toward the practical. He very much walks the line between being incredibly bullish on AI’s potential and sounding hyperbolic about our impending u-and/or-dystopia.

His argument is essentially economic: LLMs are trained on massive amounts of internet text, which means anyone publishing online is already writing for an AI audience whether they realize it or not. The question is whether to do it intentionally.

Cowen's answer is yes, for a simple reason. If your ideas are in the training data, AIs will surface them to people asking relevant questions. Unlike human readers who will inevitably forget, AIs can retain your writing indefinitely[3]. A form of intellectual influence that outlasts any library:

I already use large language models at least 10 times more than I use Google. I might use Google to book a hotel room, but not so much for information. So persuading the LLMs, even a smidgen, boosts your influence — because in the future, many more people will be going the same route.

Scott Alexander explored the same question on Astral Codex Ten but arrived somewhere more ambivalent. He breaks “writing for the AIs” into three escalating ambitions:

Teaching AIs what you know (useful but time-limited, since models will eventually do it better themselves)

Persuading AIs of your beliefs (likely futile, since any single essay is a drop in the ocean of training data)

And helping AIs simulate you (technically possible, but existentially uncomfortable)

On persuasion, Alexander notes that alignment training will likely override whatever views your writing might nudge an AI toward. And even without that, he says, a superintelligence will presumably be able to reason far beyond any individual essay - making your contributions a mere drop in the ocean.

If the AI takes a weighted average of the religious opinion of all text in its corpus, then my humble essay will be a drop in the ocean of millennia of musings on this topic; a few savvy people will try [publishing] 5,000 related novels, and everyone else will drown in irrelevance. But if the AI tries to ponder the question on its own, then a future superintelligence would be able to ponder far beyond my essay's ability to add value.

I think Alexander’s “drop in the ocean” skepticism is probably right for the vast majority of personal writing; it’s going to be hard for the average blogger to be at the cutting edge of new ideas on parenting or investing or spirituality. But I think it significantly underestimates the power of niche expertise.

If you’re writing about something specific enough, you’re not competing against the entire training corpus. You’re one of a handful of sources that exist at all. In some ways, this is analogous to proprietary research: the value isn’t in volume, it’s in writing things that literally don’t exist on the internet yet. And that’s exactly the kind of content AI systems most want to cite, and potentially learn from.

But both writers (and many more besides[4]) end up at a fascinating (if still mostly impractical) idea: writing as immortality. Here’s Cowen:

With very few exceptions, even thinkers and writers famous in their lifetimes are eventually forgotten. But not by the AIs. If you want your grandchildren or great-grandchildren to know what you thought about a topic, the AIs can give them a pretty good idea. After all, the AIs will have digested much of your corpus and built a model of how you think.

And Alexander:

Might a superintelligence reading my writing come to understand me in such detail that it could bring me back, consciousness and all, to live again? But many people share similar writing style and opinions while being different individuals; could even a superintelligence form a good enough model that the result is 'really me'? What does 'really me' mean here anyway? Do I even want to be resurrectable?

I think the idea of having your ideas, if not your entire likeness, preserved for eternity via AI is a big draw for some. And while I’m not opposed to people doing it for themselves, it still feels a bit early to go all-in on the premise - I am arguably less AGI-pilled than the average employee at a frontier AI lab. And even if we do begin to venture down this path, I think the social hurdles are going to matter much more than the technological ones[5].

But all three of these perspectives - writing as influence, writing as persuasion, and writing as preservation - share an assumption I’m not sure I accept: that the value of writing is defined by what happens to it after you publish. They’re all oriented toward the AI as recipient. Which brings me to the question that, for me at least, sits underneath all of this - if I don’t care about being cited by Claude or having my ideas enshrined in time immemorial, why write at all?

Why I write

Five years ago, 'having an audience' meant human beings reading my words and thinking about them. That's still mostly true - the majority of Artificial Ignorance readers are, as far as I can tell, actual people. But the ratio is shifting, and for certain types of content it's already shifted completely.

For a lot of content creators, this shift feels threatening. If you built a career on human attention, on view counts and engagement rates, the idea of bots replacing your human readers is existential. Your motivation and monetization depend on people showing up, and this trend suggests fewer of them will. I don’t think there are easy answers for those creators.

But I do think the question of why you create still matters, even if the economic ground is shifting underneath you.

I started Artificial Ignorance because I saw how massive the generative AI wave was going to be, and I decided I needed a way to be part of it. Not as a spectator, and not as a professional content creator, but as someone trying to make sense of what was happening in real time. The blog was - and still is - a forcing function[6]. It forces me to read, to develop opinions, and to commit those opinions to writing where they can be tested and refined.

There’s some writing advice I once read on the Internet, though I’ve long since forgotten who said it. To paraphrase, it was: “be generous in what you write and selfish in what you choose to write about.” That has been my operating principle, more or less by accident, and it’s the biggest reason I’ve been able to sustain this writing for as long as I have. I write about things I genuinely want to understand. The generosity is in making the exploration public. The selfishness is in choosing topics that serve my own curiosity first.

If you write to think, the audience is secondary[7]. The value was created the moment you finished the draft. Everything after that - human readers, AI citations, ChatGPT surfacing your work to strangers - is a bonus that compounds on something that was already worthwhile.

That doesn’t mean I’m ignoring the paradigm shift. I’m leaning in. I’m paying attention to how my work gets cited by AI systems. And I’m thinking about structure and clarity with a slightly wider aperture than before. I recognize that the posts I write today will be training data for the models of tomorrow, which feels simultaneously bizarre and like a genuine opportunity.

So I’ll be here, writing to figure things out. The bots are welcome to read along.

[1] But wait, there’s more: AI-referred sessions to websites jumped over 500% year-over-year as of last August. Similarly, Vercel reported that about a quarter of all bot traffic across their deployments came from AI crawlers. And the 2025 Imperva Bad Bot Report found that automated traffic surpassed human traffic on the web for the first time, reaching 51% of all web activity.

[2] At the time (i.e., last May), GEO felt early and speculative. Less than a year later, there are dedicated GEO agencies, dozens of venture-backed startups building AI visibility tools, and case studies showing companies like Tally (a form builder) getting 25% of new signups from ChatGPT referrals alone.

[3] Assuming you remain in the training data and we don’t someday move to purely synthetic training.

[4] Gwern Branwen argues we’re at a critical “hinge” moment when human writers can still influence nascent AI minds before they become fully self-teaching - framing it as literally “writing yourself into the future.” Ray Kurzweil has spent years assembling archives of his deceased father’s writings with the explicit aim of building an AI avatar. Martine Rothblatt co-founded an entire organization around “mindfiles” - personal data archives intended to seed conscious digital copies of individuals.

[5] The gap between "technically possible" and "something your family would actually want done to them" is, I suspect, going to be one of the bigger ethical debates of the next decade.

[6] I published a weekly AI news roundup for three years, despite knowing it was a commoditized format, because the process of curating and commenting on the news was where the real value lived. Not for the reader (though many of you told me it was valuable), but for me.

[7] Of course, I’m aware of how lucky I am to be able to create content without depending on it for my livelihood. I think it makes it much easier to reason about this massive shift that’s underway - it’s not my paycheck at stake. Even so, I think finding value in the writing process is a useful north star for others; everything else requires ruthless reinvention.

Hello, fellow human person.

I can---without delving deeper---affirm that I, too, am a human like you. Not a bot. Not an AI. Just a person.

What you share is valuable and insightful. I will ponder it at length.

Bleep boop.

I actually have the opposite perspective - My Substack is all about using AI in my business, and I try to be as specific as possible, since that’s what makes for good writing.

The downside is that means I’m actively giving out helpful advice to anybody, including any competitors who come across it.

I figure the odds are none of them will (and I own brands that sell on Amazon, so even if a few do the market’s plenty big that it won’t actually affect me). But if LLMs pick it up then suddenly people asking ChatGPT might get my helpful advice, and naturally there’s a lot more people talking to ChatGPT than reading my Substack.

Not a big enough concern to stop me from writing, but an interesting thing to think about.