Tutorial: How to make and share custom GPTs

They're not going to disrupt everything (yet), but they're a ton of fun.

After OpenAI's slew of announcements at DevDay on Monday, I feel like a kid in a candy store. There are plenty of shiny new toys to play with, but I thought GPTs were the most interesting to dive into first - for two reasons.

One, the branding and positioning on these are super confusing, and I thought folks could use some clarification on how GPTs, plugins, and tools all fit together. Two, you don't need to code to make a GPT! So they're a perfect tutorial for technical and non-technical readers alike.

Let's look at what GPTs are, how they're different from plugins, what it takes to build one, and how you can publish them to the GPT Store.

What's a GPT?

Until 72 hours ago, anyone using the term "GPT" meant one of two things:

A "Generative Pre-Trained Transformer" model, a.k.a. the underlying language model for many of OpenAI's products. OpenAI coined the term GPT in a research paper in 2018, and these models are the basis for ChatGPT's variants, such as GPT-3.5 or GPT-4.

An AI product, unaffiliated with OpenAI, that uses a large language model in some way. Examples include (but are not limited to): AutoGPT, SlidesGPT, RoomGPT, ChartGPT, StockGPT, and GirlfriendGPT.

Now, there's a third definition:

A custom version of ChatGPT that combines instructions, extra knowledge, and any combination of skills.

GPTs are pre-packaged versions of ChatGPT, with a few customizations and goodies bundled together into a bot-like package:

Name

Description

Instructions

Conversation starters

Knowledge files

Capabilities/Tools

Actions
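To make that list concrete, here's a minimal sketch of those settings as a Python data structure. To be clear, this is purely illustrative: `CustomGPT`, its field names, and the example values are hypothetical and are not part of any OpenAI API. You configure a real GPT through the ChatGPT interface, not in code.

```python
from dataclasses import dataclass, field


@dataclass
class CustomGPT:
    """Hypothetical model of a GPT's settings; NOT an OpenAI API object."""

    name: str
    description: str
    instructions: str  # the custom prompt that shapes the GPT's behavior
    conversation_starters: list[str] = field(default_factory=list)
    knowledge_files: list[str] = field(default_factory=list)  # uploaded file names
    capabilities: set[str] = field(default_factory=set)  # built-in tools
    actions: list[str] = field(default_factory=list)  # third-party services

    def summary(self) -> str:
        """Return a one-line description of the GPT and its enabled tools."""
        tools = ", ".join(sorted(self.capabilities)) or "none"
        return f"{self.name}: {self.description} (tools: {tools})"


# Example values are made up for illustration.
coach = CustomGPT(
    name="Writing Coach",
    description="Gives feedback on drafts",
    instructions="You are a supportive creative writing coach.",
    conversation_starters=["Can you review my opening paragraph?"],
    capabilities={"web_browsing", "dalle"},
)

print(coach.summary())
```

Everything except the name, description, and instructions is optional here, which loosely mirrors the idea that a GPT can be as simple as a name plus a prompt.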

As we'll see, a GPT is “just” ChatGPT with a custom prompt and settings, access to some new features, and a dash of branding. Like GPT-4, it can use DALL-E 3, web browsing, and code interpreter behind the scenes if needed. And like plugins, it can hook into third-party services and data. But it bundles all of these settings into something vaguely resembling an app, or a persona.

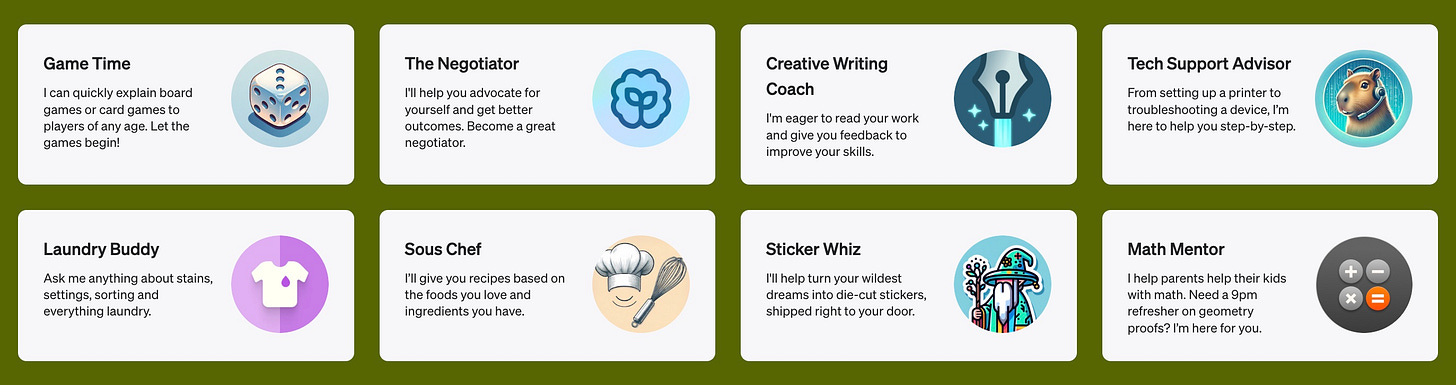

If that's confusing - you're not alone! It's going to take a while for people to fully get what GPTs are and what they're useful for. But OpenAI has a number of GPTs available out of the box, including a creative writing coach, a coloring book generator, a negotiation partner, and a recipe builder.

These use cases are meant to be much more narrow than ChatGPT as a whole, and users are meant to swap between GPTs depending on the task at hand - rather than wrangling ChatGPT to get into the right frame of mind. If you've seen my prompting tips, this is trying to bundle all of those directly into the system upfront, instead of making the user type them in.
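One way to picture "bundling the prompt into the system upfront" is the sketch below. It assumes a chat-style list of role/content messages, with the GPT's instructions placed ahead of anything the user types. How ChatGPT actually assembles a GPT's prompt behind the scenes isn't public, so treat `build_messages` and its arguments as hypothetical.

```python
def build_messages(instructions, history, user_message):
    """Place the GPT's instructions ahead of the conversation.

    Assumption: instructions act like a system message that the user
    never has to type. This is a mental model, not OpenAI's implementation.
    """
    return [
        {"role": "system", "content": instructions},
        *history,  # earlier turns in the conversation, if any
        {"role": "user", "content": user_message},
    ]


messages = build_messages(
    instructions="You are a recipe builder. Ask about dietary needs first.",
    history=[],
    user_message="What can I make with eggs and spinach?",
)

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The user only ever writes the last message; the framing that would otherwise be pasted in by hand comes along for free.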

How are GPTs different from plugins?

GPTs can best be thought of as the evolution of plugins. Before, you could create a plugin for ChatGPT that had access to APIs and outside data. But installing them was clunky, you could only enable 3 at a time, and you couldn't use them alongside web browsing or code interpreter. While there were some successful ones ("chat with your PDF" plugins, for example), ChatGPT plugins didn't have the same product-market fit as the rest of the product.

People thought they wanted their apps to be inside ChatGPT, but what they really wanted was ChatGPT in their apps.

– Sam Altman (paraphrased)

With GPTs, we see some of the same ideas but with more capability. Instead of limiting yourself to a few plugins within the usual ChatGPT interface, you choose a GPT at the start of your conversation that has access to the tools you need. Just want to talk? ChatGPT. Need data on real estate in your area? ZillowGPT. Trying to design a marketing flyer? CanvaGPT.

GPTs can do almost everything plugins can do - call external services and work with files. In fact, there's an automatic way to migrate an existing plugin to a GPT. My expectation is that plugins will be phased out in favor of GPTs and the GPT Store.

What's the GPT Store?

The GPT Store is OpenAI's version of an App Store. It's expected to launch later this month, and when it does, users will be able to browse and save publicly available GPTs. There's also expected to be a revenue-sharing component for the most popular GPT authors. Presumably, that means that GPTs will only be available to ChatGPT Plus subscribers moving forward, but there aren't a lot of details on that front.