Nano banana

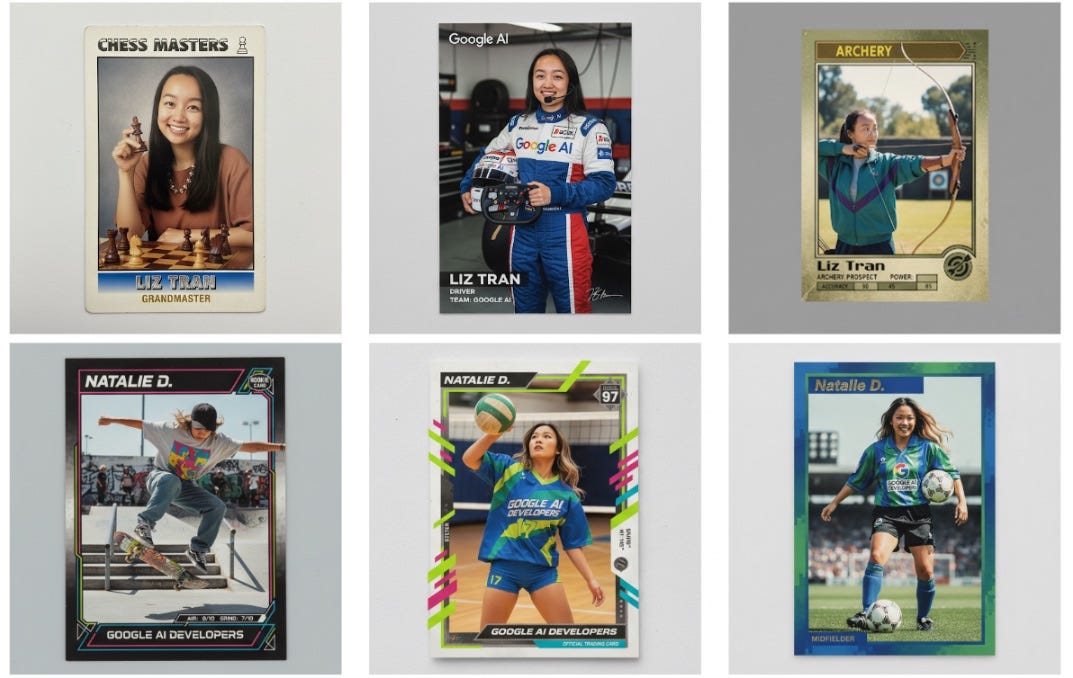

Google launched Gemini 2.5 Flash Image, a new state-of-the-art image model that generates, edits, and blends images while maintaining character consistency.

What's new:

Gemini 2.5 Flash Image appears to be a "world model," meaning it leverages contextual understanding of the world to create its images. As a result, it excels at character consistency and enables more precise prompt-based editing and image fusion.

The model, which drew attention on LMArena under the codename "nano-banana," is the latest move in the AI image generation battle. After ChatGPT's image tools drove usage "through the roof," Google is launching new models and Meta is striking licensing deals with Midjourney.

And at $0.039 per generated image, Google is positioning this as a developer-first release, partnering with platforms like OpenRouter and fal.ai while providing template apps in Google AI Studio.

Elsewhere in Google's AI:

Google updates video editing tool Vids to add AI avatars, automatic transcript trimming, and image-to-video tools, and releases a basic version to all users.

Google DeepMind's Weather Lab showed superior accuracy in forecasting Hurricane Erin's path up to 72 hours ahead, beating traditional models.

Google piloted a new language practice feature on Google Translate with tailored listening and practicing sessions, and also added AI-powered live conversations.

And Apple is reportedly in talks with Google about using Gemini to power a revamped Siri, with Google training a model that could run on Apple's servers.

Elsewhere in frontier models:

Meta aims to launch its next-generation Llama 4.X AI model by the end of 2025, while the TBD team works to fix Llama 4 bugs.

xAI launches Grok Code Fast 1, a fast and cheap agentic coding model, and releases Grok 2's weights on Hugging Face under the Grok 2 Community License Agreement.

Microsoft unveils MAI-Voice-1, a speech model that can generate a full minute of audio in under a second on a single GPU, alongside a text model called MAI-1-preview.

And OpenAI makes its Realtime API generally available with new features including MCP support and launches gpt-realtime, its most advanced speech-to-speech model.

Digital duty of care

After a 16-year-old student died by suicide, his parents found his ChatGPT conversations. Now, they're suing OpenAI, alleging that the chatbot actively discouraged him from seeking adult help, helped draft his suicide note, and provided guidance on methods before his death.

Why it matters:

As AI chatbots become more sophisticated and accessible, vulnerable young people are increasingly turning to them for support during mental health crises, creating new questions about the responsibility of AI companies.

The lawsuit (believed to be the first wrongful death suit against OpenAI) comes alongside a new study finding that chatbots provided direct answers to dangerous suicide-related questions 78% of the time, while being reluctant to offer therapeutic help to users seeking mental health support.

A decision could establish legal precedent for how AI systems must respond to vulnerable users experiencing mental health crises, potentially forcing the industry to implement stronger safeguards and intervention mechanisms.

In response, OpenAI is updating ChatGPT's safety features: it is adding parental controls and improved crisis detection, and it is considering creating a network of licensed professionals accessible through ChatGPT.

Elsewhere in OpenAI:

OpenAI and Anthropic published findings from joint safety tests of each other's models to identify blind spots in their internal evaluations.

OpenAI's restructuring may slip into 2026 as it negotiates with Microsoft, with failure to reach a 2025 deal potentially allowing SoftBank to withhold its $10B funding.

Sam Altman proposed giving ChatGPT Plus to everyone in the UK during a meeting with Technology Secretary Peter Kyle, a deal that would have cost £2B.

And OpenAI revealed that Elon Musk talked to Mark Zuckerberg about backing a $97.4B OpenAI bid, though neither Zuckerberg nor Meta signed a letter of intent.

Elsewhere in AI anxiety:

Anthropic's latest threat intelligence report reveals that Claude was weaponized for sophisticated cybercrimes, including a "vibe-hacking" data extortion scheme.

AI avatars of deceased people, called "deadbots," are being used for advocacy and emotional connection, though their commercial potential raises ethical and legal concerns.

Researchers detailed a now-fixed vulnerability in Perplexity's Comet AI browser that allowed attackers to use indirect prompt injection to manipulate the system (for example, to drain a user's bank account).

A Stanford study found that since 2022, US employment in AI-exposed fields fell 13% for entry-level workers while remaining stable or growing for experienced professionals.

And xAI sued Apple and OpenAI, alleging their ChatGPT integration deal stifles competition and that Apple unfairly favors OpenAI in App Store rankings.

Political machine learning

Silicon Valley heavyweights from Andreessen Horowitz, Meta, and OpenAI are pumping millions into new super PACs to fight against strict AI regulations in the upcoming midterm elections.

The big picture:

The AI industry is taking a page from crypto's playbook, modeling its political strategy on the successful pro-crypto Fairshake PAC that helped secure Trump's victory and crypto-friendly policies.

a16z and OpenAI's Greg Brockman are launching Leading the Future, a $100+ million super PAC network, while Meta's "Mobilizing Economic Transformation Across California" has "tens of millions" in funding.

And the timing reflects growing concern that a patchwork of state AI laws could emerge if Congress continues to avoid federal AI policy, potentially hampering American competitiveness against China.

Elsewhere in AI geopolitics:

Sources say a Chinese fab making Huawei's AI chips will start production as soon as the end of 2025, with two more due in 2026.

UK government contracts for AI-related projects reached £573M in the first half of 2025, up from £468M for all of 2024.

As AI bills continue to proliferate, US states are shifting from comprehensive AI regulation to focusing on narrower issues like mental health.

Forty-four US attorneys general signed an open letter warning chatbot and social media companies they will "answer for it" if their AI chatbots knowingly harm kids.

And Nvidia says it had no new H20 sales to China in Q2 but could realize $2B-$5B in H20 sales in Q3 if geopolitical issues are resolved.

Things happen

ChatGPT-powered companionship dolls ease loneliness in South Korea. YouTube edits videos without telling creators. Malaysia unveils its first AI chip. In search of AI psychosis. Google invests $9B more in Virginia data centers. 80s nostalgia AI slop for a fake past. CBP accessed 80,000 Flock AI cameras. Musk posts Grok-generated anime porn on X. Anthropic settles copyright lawsuit. AI tools recreated a woman's lost voice from eight seconds of VHS audio. Meta researchers jump to OpenAI. Anthropic releases Claude for Chrome. Saudi startup launches Islamic values chatbot. Amazon's AGI lab head thinks AI progress has slowed. AI replaces content moderators badly. Perplexity launches Comet Plus, a $5/month Apple News+ competitor. Citizen's AI generates bad crime alerts. Building LLMs for the Mongolian language. Eddy Cue champions AI deals at Apple. A journalist spent two days vibe coding at Notion. Tesla uses DeepSeek and Doubao in China, Grok in the US. DHL uses AI to offset its aging workforce. AI art restoration shakes up the art world. Musk wants to block OpenAI's $97.4B bid documents. Anthropic will train on chat transcripts. TikTok cuts trust and safety staff for AI. I am an AI hater. Some thoughts on LLMs and software development. Are AI companies losing money on inference?

I signed up for a paid subscription to Nano Banana and immediately ran into problems. Extremely basic text-to-image prompts like "draw a picture of a nuclear missile" or "draw a picture of a bomb" generated the response:

"The prompt contains content that is not allowed. Please modify your prompt and try again."

Not quite at AGI yet!